DigiHuman

Real-time markerless mocap pipeline. BlazePose + MediaPipe drive a Unity humanoid; PIFuHD + RigNet turn one photo into a rigged 3D character.

MSc Computer Science at York University (Toronto, Ontario), focused on VR — facial expression and motion capture for 3D virtual characters with Unity3D, Unreal Engine, and ARKit, bridging virtual and real-world interaction. I build hybrid systems where ML, real-time graphics, and game engines meet: mocap pipelines, AI-driven engines, VR training, and LLM systems.

Selected work across game development, ML, computer vision, and systems.

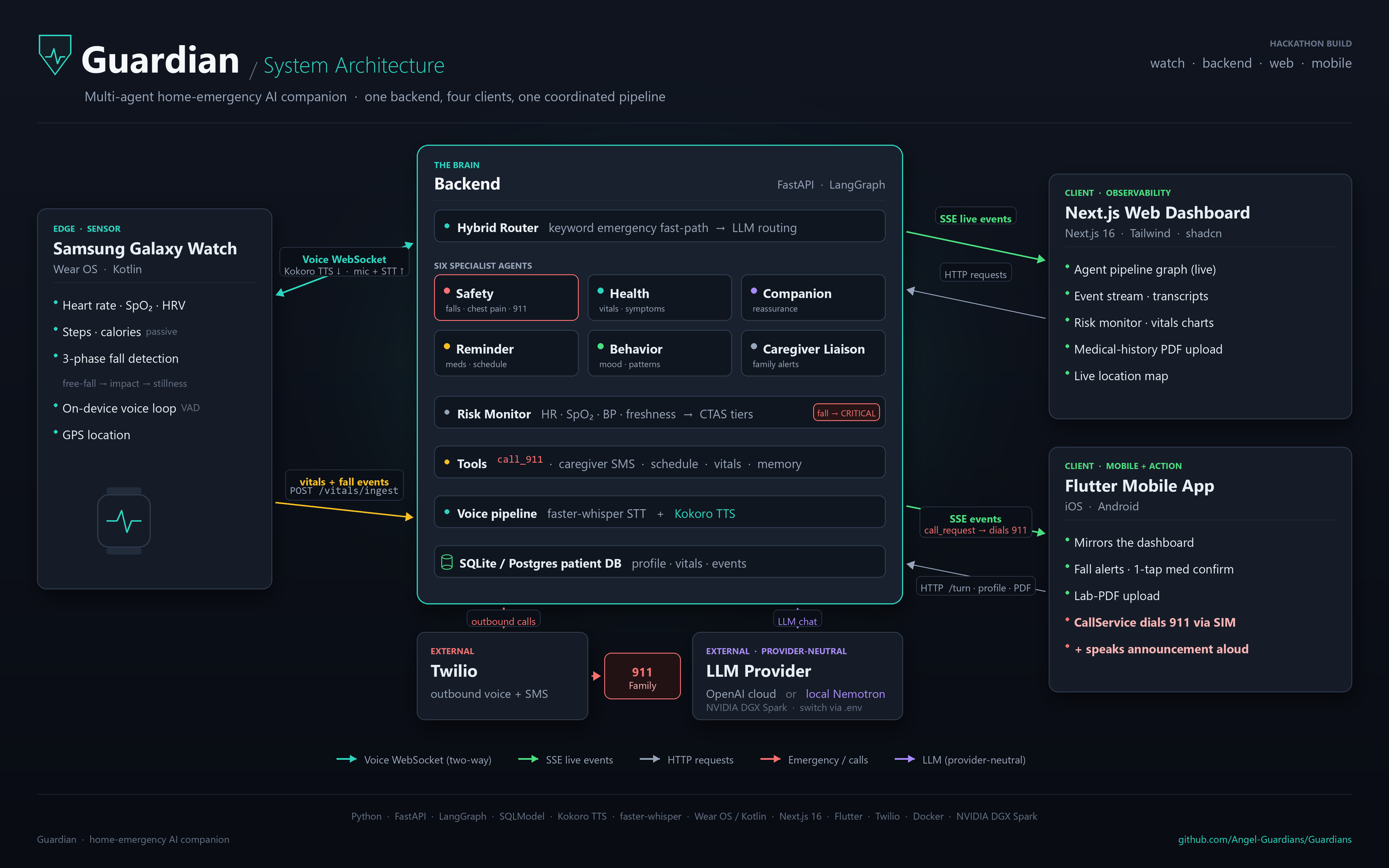

Local-first emergency AI companion for people who live alone. A Wear OS watch detects falls; a six-agent LangGraph backend calls 911 with full medical context and alerts family — all running on an NVIDIA DGX Spark.

AI game engine that procedurally generates 3D worlds from text prompts. Built the wall-cutout system, cinematic camera, and CI/CD.

GameCraft 2025 jam demo: a narrative experience set inside the mind of a character with a paranoid disorder. Explores mood, perception shifts, and trust mechanics.

Strategic multiplayer card game. Reinforcement-learning AI opponent; Photon + PlayFab for online play.

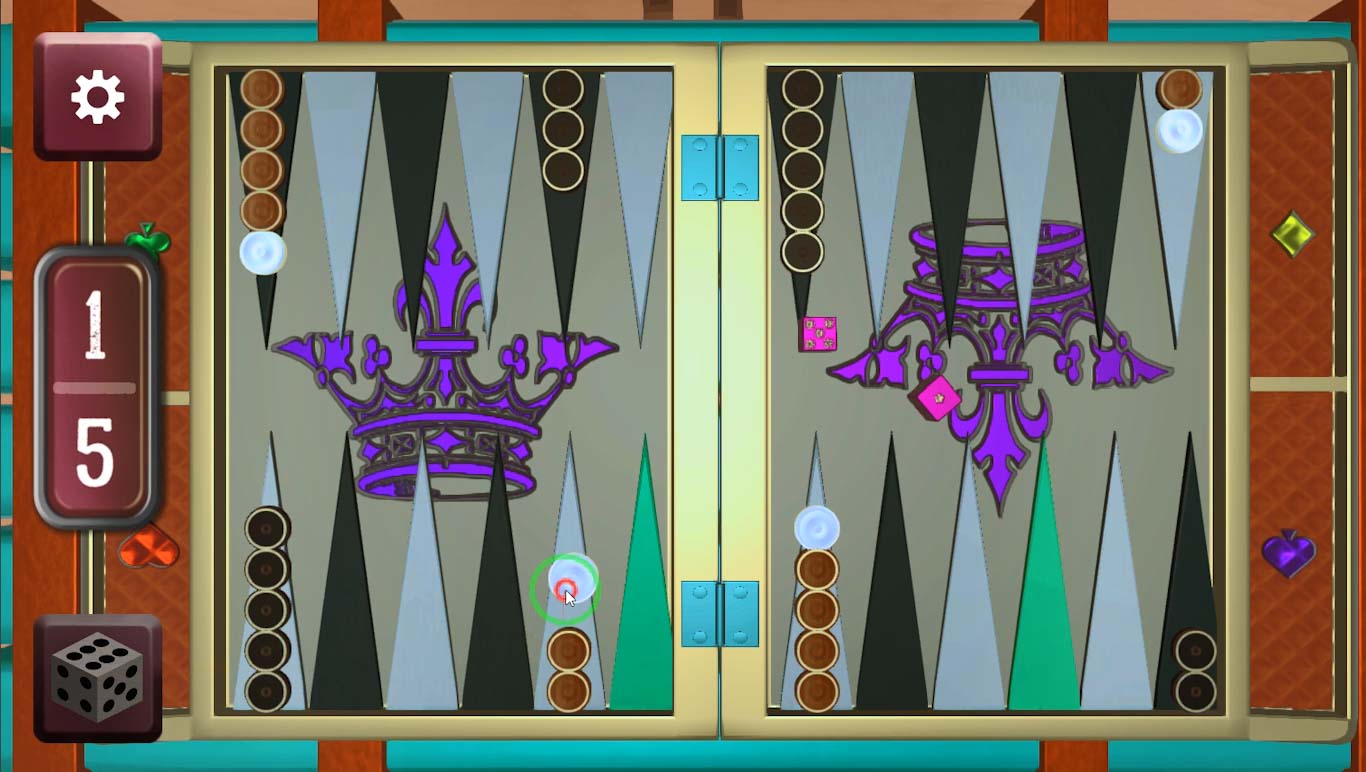

Turn-based multiplayer game with offline and AI modes. Monte Carlo tree search drives the AI's decisions; Photon handles online play.

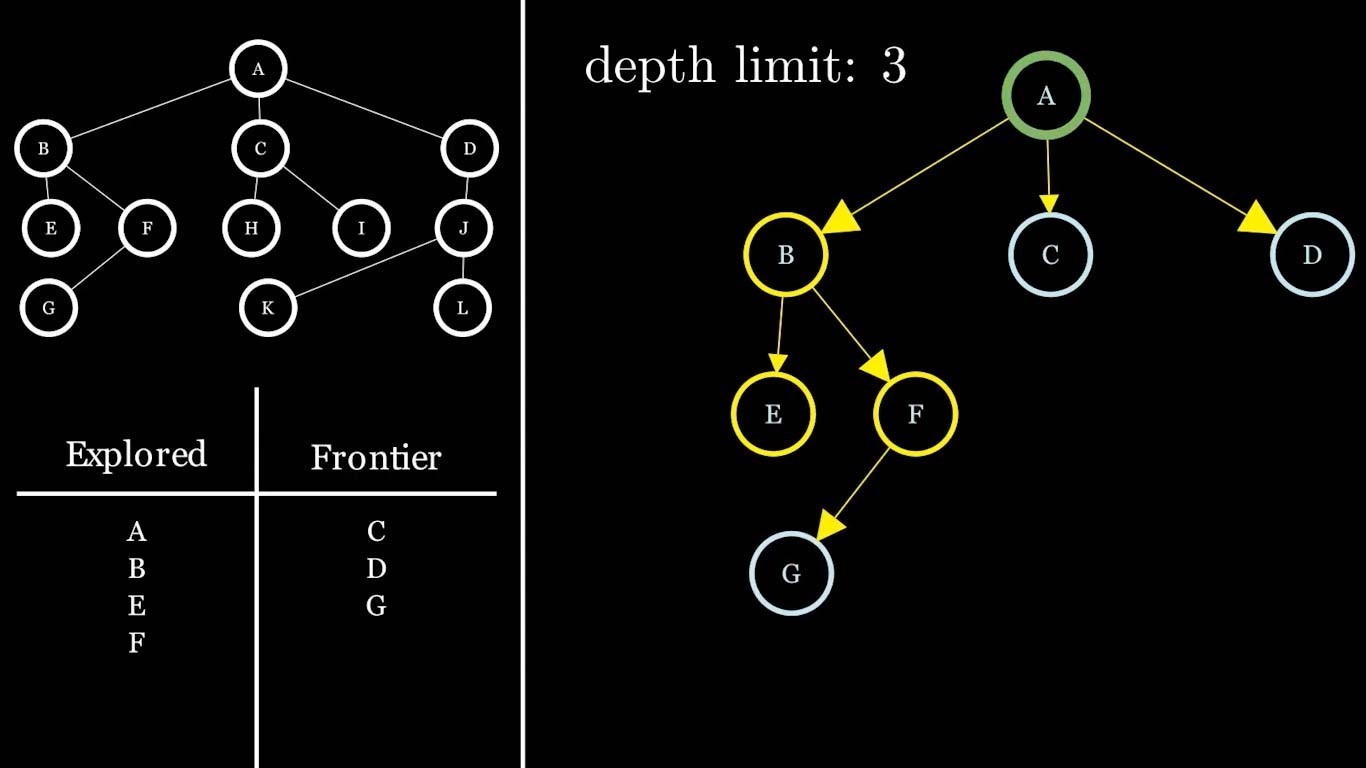

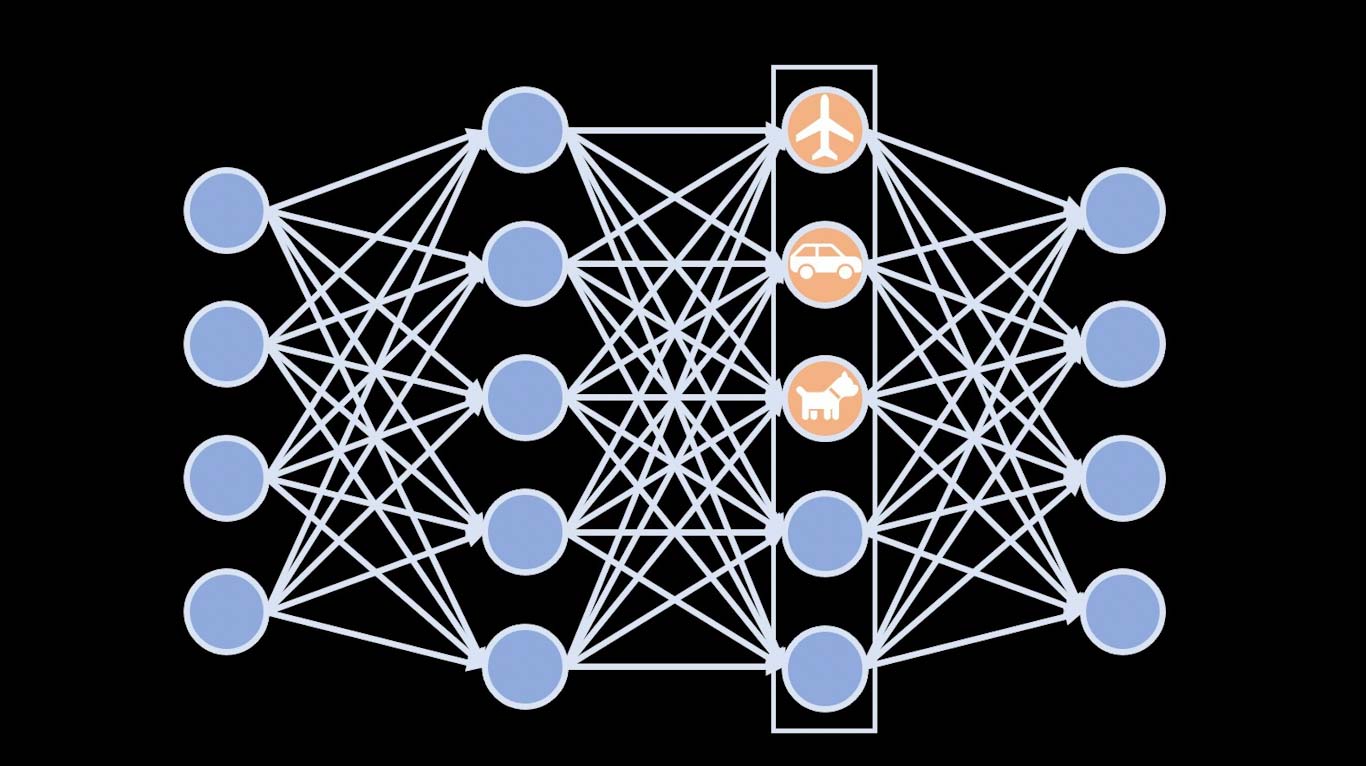

Visual content created while I was a teaching assistant for an AI course. The animations, published on YouTube, explain core concepts.

Information-retrieval course project: a search engine built from scratch, with k-NN classification, k-means preprocessing, tf-idf weighting, and champion lists.

NLP project that predicts a poem's author from its text. Combined unigram and bigram language models reach 86% accuracy.

Real-time solar-system simulation written in raw OpenGL. Orbital mechanics, lighting, textured planets.

Panoramic image-stitching app for seamless 360° images. Feature detection + matching with OpenCV; intuitive upload UI.

Course-based deep-learning track covering handwritten-digit recognition, MNIST GANs, and cat/dog classifiers, implemented at both a low level (NumPy) and a high level (Keras/TensorFlow).

URL-shortener service in Java + MySQL. Containerized with Docker and orchestrated on Kubernetes to run multiple instances concurrently.

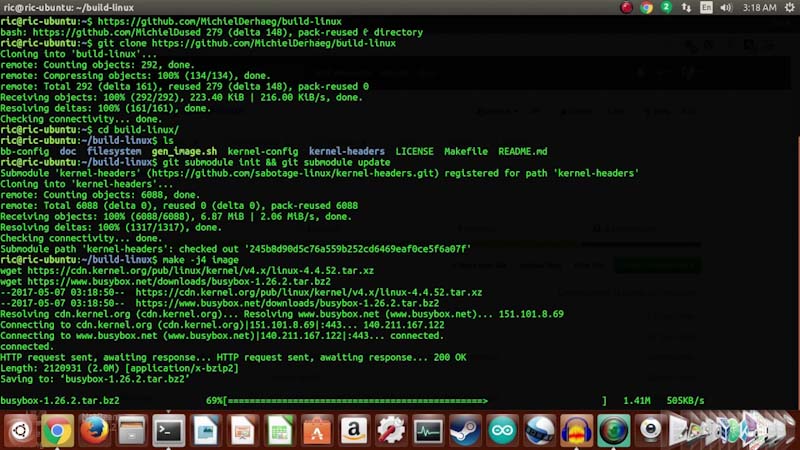

Modified the xv6 operating system: added system calls, swapped CPU scheduling algorithms, and customized kernel behavior.

Hyper-casual iOS game using 3D mesh pathfinding for smooth on-mesh movement.

First game I ever shipped — a multiplayer P2P tank game in pure Java. Final project for advanced programming.

My very first programming project: a Tower of Hanoi in C that lit the spark for everything since.

Roles where I shipped — research labs, startups, game studios, university teaching.

Partnering with DXTR to develop an MVP for a real-time VR firefighter training system, focusing on skill deviation detection using headset telemetry.

Core contributor to DreamForge, an AI-driven game engine that procedurally generates complete 3D game worlds from text prompts.

VR and motion-capture projects using Meta Quest, ARKit, Unity3D, and Unreal Engine.

Simulation systems for high-traffic environments such as airports, train stations, and malls using Unity3D.

Led development of Techu, a strategic card game — gameplay, AI, and multiplayer integrations.

Volunteer full-stack developer for IAESTE (International Association for the Exchange of Students for Technical Experience).

Technical staff for AUT Game Development Events for two consecutive years, covering technical organization, content, and tutoring, alongside teaching assistant roles across several courses.

Internship at Sepantab, an IoT startup, during the first summer after the pandemic began. Built an online mobile game that café customers play while waiting for their orders, offering simple engagement and light rewards.

Game developer on a small team shipping hyper-casual and adventure games for iOS, Android, and Windows.

Master's work at the intersection of virtual reality and telecommunication: facial expression and motion capture for 3D virtual characters. Uses Unity3D, Unreal Engine, and ARKit to bridge virtual and real-world interaction, while deepening my C# and C++ skills for game development and XR.

Early focus on game development; after an AI course, moved deeper into generative models (GANs, deep networks) via Stanford and Coursera during the pandemic. Fascinated by generative models in computer vision. Thesis (eight months): pose estimation, automatic 3D animation generation, and rigged 3D character mesh generation.

Completed at NODET (National Organization for Development of Exceptional Talents). Took part in robotics workshops with open-weight football robots; competed at RoboCup and placed 4th out of 32 teams.

Funding, competitions, scholarships, and conference presentations.

Interested in collaborating, or just want to get in touch? I'd be glad to hear from you.